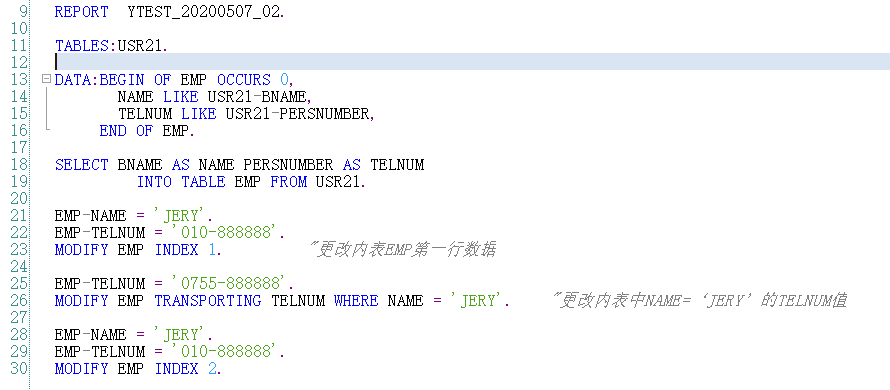

I have 2 parts of code. Both of them process 1.5 million records, but the first part takes 20 minutes and the 2nd part takes 13.5 hours!

The first part:

`select * from bseg into corresponding fields of itab`

The 2nd part, which takes 13.5 hours, is the following:

`sort itab by belnr.`
`lv_5per_total = con_5per_tax * itab2-dmbtr.`
`read table itab with key belnr = itab2-belnr`
`if itab-dmbtr between lv_5per_lower and lv_5per_upper.`
`modify itab transporting budat where belnr = itab2-belnr.`

Does anyone have an idea on how to fix the 2nd part?

After the 1st process ITAB has 1.5 million records. In the 2nd part, in the 1st loop, I sum the amounts per belnr for the accounts that start with 73. In the 2nd loop I compare the sum per belnr with the amount of the belnr/account and do what the code says.

First of all, the initial code already existed and I added the new one. So the declaration of the tables was:

`data : begin of itab occurs 0,`

After your suggestion I made the following changes:

`types: begin of ty_belnr_sums,`
`data: git_belnr_sums type sorted table of ty_belnr_sums`
`move-corresponding itab to gwa_belnr_sums.`
`collect gwa_belnr_sums into git_belnr_sums.`
`loop at git_belnr_sums into gwa_belnr_sums.`
`lv_5per_total = con_5per_tax * gwa_belnr_sums-dmbtr.`
`read table itab with key belnr = gwa_belnr_sums-belnr`

Now I am running it in the background for 1.5 million records and it is still running after 1 hour.

I guess everyone has already given you all the hints to optimize your code. Essentially, as you want to optimize only the 2nd part, the only issues seem to be with the itab operations (loop, read, modify), which you may improve by using an index or a hash table on the internal table itab.

Note: a more suitable name for this blog would be “DELETE itab statement and memory use” or “DELETE itab statement and memory deallocation”, because the Garbage Collector is not responsible for the memory allocation of internal tables. Thanks to Randolf Eilenberger for alerting me about this (see comments).

This week I had a problem regarding memory use in an ABAP program. While debugging the program with the Memory Analysis tool active, I found something that intrigued me. After a DELETE itab statement where 60% of the rows were deleted, the memory use increased instead of decreasing. I had never been curious enough to analyze the memory use of a program after a DELETE statement, because I really believed that the DELETE statement and memory release were like synonyms. But unfortunately I was completely wrong.

Let me show you an example that I created for this blog. As we can see, the memory used increased after the DELETE (note that the number of rows was reduced dramatically).

So I decided to investigate it, and that’s why I’m here, writing this blog. After a few tests I found a way to release the memory: transferring the remaining rows to an auxiliary internal table, clearing the original internal table, and transferring the content back to the original. As expected, at first we have an increase in the memory use. But after clearing the original table the memory use is reduced dramatically.

I would like to know from the community if you have already noticed this behavior of the ABAP garbage collector.

Below is the source code of a method to release the memory allocated to an internal table (it is intended to be used after deleting a reasonable number of rows).

Method Definition

`METHODS: release_memory CHANGING ch_t_tab TYPE STANDARD TABLE.`
`DATA: lo_struct_typ TYPE REF TO cl_abap_structdescr,`
`lo_dyntable_typ TYPE REF TO cl_abap_tabledescr,`
`lo_struct_typ ?= cl_abap_typedescr=>describe_by_data( ).`
`lo_dyntable_typ = cl_abap_tabledescr=>create( p_line_type = lo_struct_typ ).`
`CREATE DATA: lt_dyntable TYPE HANDLE lo_dyntable_typ,`

Updated with a new example, following the suggestion to call the garbage collector manually. Result: starting the garbage collector after the DELETE statement doesn’t release memory; in fact it increases the memory used a little bit more…

My first test was exactly this one, moving all content using `lt_ekko_aux = lt_ekko`, and that didn’t work. Searching the documentation I found the following explanation:

Because internal tables and strings can become quite large, ABAP saves copying workload by employing a lazy copy strategy (Copy-On-Write). The initial sharing/revocation of initial sharing in static boxed components is analogous in its effect. As long as the component remains unchanged, ABAP lets all variables that refer to the table or string point to a single memory object. Only when a table or string is changed via a variable does the ABAP runtime make a separate copy of the changed object. This lazy copy strategy means that changes to tables, strings, or boxed components can result in surprising jumps in memory consumption, as seen in the Memory Inspector.
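The copy, clear, and copy-back workaround described in the blog can be sketched roughly as follows. This is only a minimal illustration under stated assumptions: the row type `ty_row`, the tables `lt_data` and `lt_aux`, and the row counts are hypothetical, and the table type is static here, whereas the blog's `release_memory` method builds the auxiliary table dynamically. The rows are copied with `APPEND LINES OF` rather than a plain assignment, on the assumption that this avoids the copy-on-write sharing quoted from the documentation.

```abap
REPORT zdemo_release_itab_memory.

" Hypothetical row type and table names, used only for this sketch.
TYPES: BEGIN OF ty_row,
         id   TYPE i,
         text TYPE c LENGTH 40,
       END OF ty_row.

DATA: lt_data  TYPE STANDARD TABLE OF ty_row WITH DEFAULT KEY,
      lt_aux   TYPE STANDARD TABLE OF ty_row WITH DEFAULT KEY,
      ls_row   TYPE ty_row,
      lv_lines TYPE i.

" Fill the table with sample rows.
DO 100000 TIMES.
  ls_row-id   = sy-index.
  ls_row-text = 'sample'.
  APPEND ls_row TO lt_data.
ENDDO.

" Delete a large share of the rows (ids above 40000, i.e. 60%).
DELETE lt_data WHERE id > 40000.

" Copy the surviving rows into the auxiliary table. A plain assignment
" (lt_aux = lt_data) would only share the existing table body.
APPEND LINES OF lt_data TO lt_aux.

" Clear the original table, as described in the blog text.
CLEAR lt_data.

" Rebuild the original table from the copy, then drop the copy.
APPEND LINES OF lt_aux TO lt_data.
FREE lt_aux.

lv_lines = lines( lt_data ).
WRITE: / 'Rows kept:', lv_lines.
```

Comparing the memory figures in the Memory Inspector before the `DELETE`, after it, and after the copy-back should show whether the effect reported in the blog also appears on a given system.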
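For the forum question about the slow second part, the suggestion to use a sorted or hashed table could look roughly like the sketch below. It is an illustration under assumptions, not the poster's actual program: the row types, the `hkont` field, the sample rows, and the placeholder update of `budat` are hypothetical, and the 5% tolerance check is left out because its bounds are not shown in the thread. The point is only that a `LOOP ... WHERE` on the key of a sorted table avoids scanning all 1.5 million rows for every document number.

```abap
REPORT zdemo_sorted_lookup.

" Hypothetical, simplified row types; the real itab has more fields.
TYPES: BEGIN OF ty_item,
         belnr TYPE c LENGTH 10,
         hkont TYPE c LENGTH 10,
         dmbtr TYPE p LENGTH 13 DECIMALS 2,
         budat TYPE d,
       END OF ty_item,
       BEGIN OF ty_sum,
         belnr TYPE c LENGTH 10,
         dmbtr TYPE p LENGTH 13 DECIMALS 2,
       END OF ty_sum.

DATA: lt_items TYPE SORTED TABLE OF ty_item WITH NON-UNIQUE KEY belnr,
      lt_sums  TYPE SORTED TABLE OF ty_sum WITH UNIQUE KEY belnr,
      ls_item  TYPE ty_item,
      ls_sum   TYPE ty_sum.

FIELD-SYMBOLS: <ls_item> TYPE ty_item.

" Two sample rows for one document number.
ls_item-belnr = '0000000001'.
ls_item-hkont = '7300000000'.
ls_item-dmbtr = 100.
INSERT ls_item INTO TABLE lt_items.
ls_item-hkont = '4000000000'.
ls_item-dmbtr = 5.
INSERT ls_item INTO TABLE lt_items.

" 1st loop: sum the amounts per belnr for accounts starting with 73.
LOOP AT lt_items ASSIGNING <ls_item>.
  IF <ls_item>-hkont(2) = '73'.
    ls_sum-belnr = <ls_item>-belnr.
    ls_sum-dmbtr = <ls_item>-dmbtr.
    COLLECT ls_sum INTO lt_sums.
  ENDIF.
ENDLOOP.

" 2nd loop: the WHERE condition matches the sorted key, so the rows of
" one document are located by key instead of a full table scan.
LOOP AT lt_sums INTO ls_sum.
  LOOP AT lt_items ASSIGNING <ls_item> WHERE belnr = ls_sum-belnr.
    " Placeholder update; the real condition and value are not shown.
    <ls_item>-budat = sy-datum.
  ENDLOOP.
ENDLOOP.
```

Updating through a field symbol also replaces the `MODIFY ... WHERE` statement, which on a standard table has to scan every row on each call.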
